Research Report — Arqion Labs | July 2025

Most companies assume AI risk is theoretical. It’s not. It’s operational. It’s embedded. And it’s already active inside your walls.

At Arqion Labs, we spent the past 6 months mapping the real-world threats that generative AI introduces into enterprise environments. What we found was alarming: from intellectual property loss to regulatory landmines, the risks are not only real — they’re largely unaddressed.

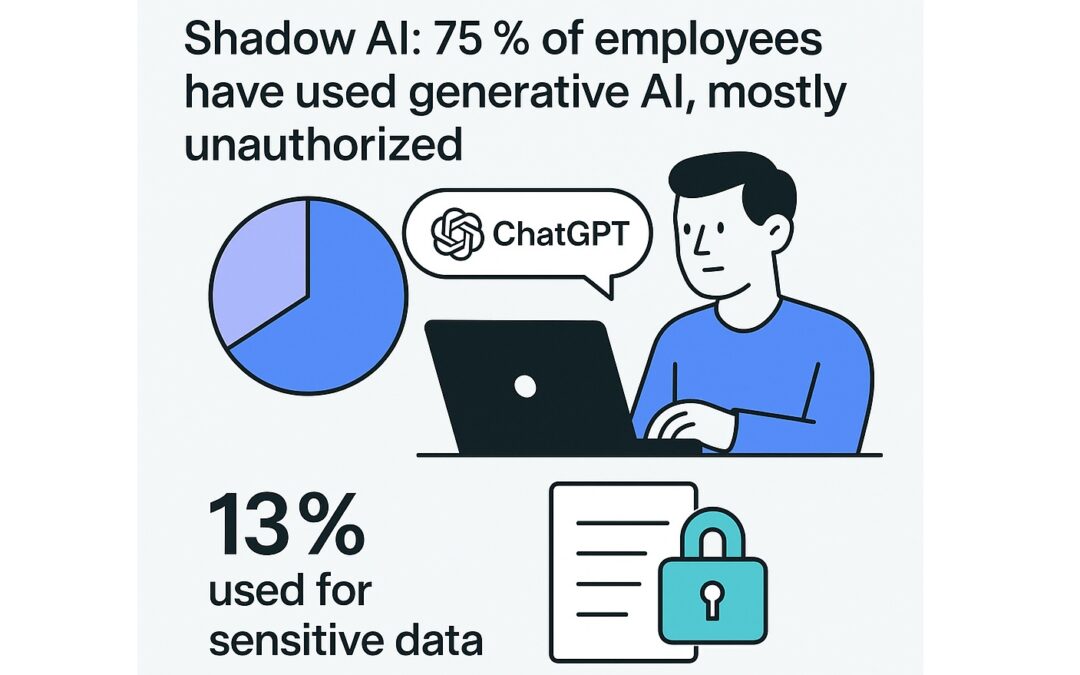

Employees are already using tools like ChatGPT and other public LLMs to write reports, summarize contracts, draft emails, debug code, analyze spreadsheets, and brainstorm content. This adoption isn’t hypothetical — it’s pervasive, and in most cases, completely unauthorized. Studies show that up to 75% of knowledge workers have used generative AI tools at work (Statista, 2024). Crucially, over 13% of those interactions include sensitive company data, including client information, PII, financial records, and legal documents (Lasso Security, 2024).

This behavior is often untracked and unapproved, a pattern cybersecurity professionals now call “shadow AI”. Employees use personal ChatGPT accounts or browser extensions, bypassing IT policies and data loss prevention controls, and they may not even realize the risk. In a recent Cyberhaven study, 11% of prompts involved confidential materials, and none of the users reported realizing they were breaching policy.

From a security standpoint, every one of these prompts represents a potential exposure event. The submitted data is sent to third-party servers, retained under opaque terms, and possibly used to train future models. There is no visibility, no control, and no ability to recall what’s been shared. Yet in most organizations, this behavior goes entirely unnoticed — until the damage is already done.

🔎 Our Research: 20 AI Threats That No One Is Addressing in Full

We’ve categorized these into four domains:

⚠️ External Data Risks

- Data Exfiltration – Employees paste client or proprietary data into tools you don’t control. That data may be retained or leaked.

- Regulatory Non-Compliance – Untracked AI use can violate HIPAA, GDPR, FERPA, PCI DSS, etc.

- Third-Party API Abuse – Many AI plugins and browser tools reroute data to unknown destinations.

- Legal Discovery Risk – Any AI-assisted document could be discoverable in court without proper audit logs.

🔓 Internal Process Risks

- Shadow AI Use – Employees use ChatGPT with personal accounts. No policy. No visibility.

- Accidental Data Leakage – Well-meaning staff paste internal documents, passwords, or emails into prompts.

- Lack of Auditability – No per-user logging means no forensics after a bad decision or breach.

- Unenforced Policy – Written policies mean nothing without technical enforcement.
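To make the auditability gap concrete, here is a minimal sketch of per-user prompt logging. It assumes prompts pass through a gateway you control. The function name `audit_record`, the field names, and the `chat-gateway` label are hypothetical and chosen only for illustration.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(user_id: str, tool: str, prompt: str) -> str:
    """Build one JSON audit line for a single prompt submission.

    Stores a SHA-256 digest and the prompt length instead of the raw text,
    so the log does not become a second copy of sensitive data.
    """
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),  # UTC timestamp
        "user_id": user_id,                            # who sent it
        "tool": tool,                                  # which AI endpoint was used
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "prompt_chars": len(prompt),
    }
    return json.dumps(record, sort_keys=True)


if __name__ == "__main__":
    # Hypothetical user ID and tool name, for illustration only.
    print(audit_record("u-1042", "chat-gateway", "Summarize the Q3 contract"))
```

Logging a digest rather than the prompt itself is one possible design choice: it lets an investigator confirm whether a known document was submitted without storing that document again. Teams that need full-text forensics would have to store prompts in an encrypted, access-controlled store instead.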

🧠 AI-Specific Technical Risks

- Prompt Injection Attacks – Attackers override system instructions using clever prompt design.

- Model Hallucinations – LLMs fabricate facts, case law, or citations that mislead users.

- Indirect Prompt Leaks – Data from one user’s prompt is reused in another session.

- Prompt Replay or Session Hijack – Without authentication, session reuse can expose data.

- Training Data Contamination – Public model providers can incorporate your submitted data into the training of future model versions.
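As an illustration of the replay and session-reuse risk above, the following is a minimal sketch of one common defense: each request carries a timestamp, a one-time nonce, and an HMAC tag, and the server rejects anything forged, stale, or already seen. The names `sign_request`, `ReplayGuard`, and `MAX_AGE_SECONDS` are hypothetical, and the five-minute window is an assumption, not a recommendation from this research.

```python
import hashlib
import hmac
import secrets
import time
from typing import Optional

MAX_AGE_SECONDS = 300  # assumed freshness window for this sketch


def _message(user_id: str, ts: str, nonce: str, body: str) -> bytes:
    # Canonical byte string that gets signed and later re-checked.
    return "\n".join([user_id, ts, nonce, body]).encode("utf-8")


def sign_request(key: bytes, user_id: str, body: str,
                 now: Optional[float] = None) -> dict:
    """Attach a timestamp, a one-time nonce, and an HMAC-SHA256 tag."""
    ts = str(int(now if now is not None else time.time()))
    nonce = secrets.token_hex(16)
    tag = hmac.new(key, _message(user_id, ts, nonce, body), hashlib.sha256).hexdigest()
    return {"user_id": user_id, "ts": ts, "nonce": nonce, "body": body, "tag": tag}


class ReplayGuard:
    """Rejects requests that are forged, stale, or already seen."""

    def __init__(self, key: bytes):
        self._key = key
        self._seen = set()  # in-memory only; a real service would expire old nonces

    def accept(self, req: dict, now: Optional[float] = None) -> bool:
        now = now if now is not None else time.time()
        msg = _message(req["user_id"], req["ts"], req["nonce"], req["body"])
        expected = hmac.new(self._key, msg, hashlib.sha256).hexdigest()
        if not hmac.compare_digest(expected, req["tag"]):
            return False  # tampered with, or signed with the wrong key
        if abs(now - int(req["ts"])) > MAX_AGE_SECONDS:
            return False  # outside the freshness window
        if req["nonce"] in self._seen:
            return False  # same request presented twice
        self._seen.add(req["nonce"])
        return True
```

In this sketch a captured request cannot be resubmitted, because its nonce is already recorded, and it cannot be edited, because any change invalidates the tag. A production system would also need per-user keys and shared nonce storage across servers, which are left out here.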

💣 Emerging AI Exploit Risks

- AI-to-AI Exploits – Malicious LLM agents poisoning or manipulating other systems.

- Credential Leakage – Staff paste passwords or API keys into prompts for debugging.

- Agent Chain Risk – Agent-based tools amplify risks by chaining multiple steps across platforms.

- Legal Gray Zones in AI Output – Unclear IP ownership and liability in AI-generated content.

- Semantic Retention Cross-Leak – Shared embeddings could expose patterns from other orgs.

- Output Contamination – Outputs could inadvertently include confidential or protected data.

- TOS Mismatch Liability – Employees using the ChatGPT web app under personal accounts may accept consumer terms that lack the protections of your enterprise agreements.

The IP Problem: How AI Can Unintentionally Void Trade Secrets

One of the most overlooked dangers? Loss of intellectual property protection.

U.S. law requires “reasonable efforts” to maintain confidentiality in order to uphold trade secret status. When employees paste internal code, formulas, or strategy decks into public LLMs, they may unintentionally forfeit protection — permanently.

Once trade secret status is lost, your legal claim to exclusivity is gone. Worse, because personal accounts on public tools like ChatGPT operate under different terms of service than enterprise agreements, employees may unknowingly authorize open-ended data retention or reuse.

These risks aren’t speculative. Samsung, Amazon, JPMorgan, and others have already restricted or banned internal ChatGPT use after leaks and near-misses.

🛡 The AEGIS Response: A Research‑Driven, Full-Spectrum AI Security Platform

While dozens of point solutions exist, none address all 20 threats outlined above. AEGIS was engineered from the ground up to do exactly that.

| Threat Domain | AEGIS Protection |
|---|---|
| Shadow AI Use | Authenticated, logged interface replaces public tools |
| Sensitive Input Data | Redaction pipeline strips PII, IP, and secrets pre-LLM |
| Model Hallucinations | Policy-enforced human review workflows + output logs |
| Prompt Injection | Filtered context + injection sanitization per session |
| Regulatory Risk | Audit logs, encryption, session isolation, BAA-compliant |
| IP Ownership | Use of private OpenAI API under enterprise terms |
| Session Hijack | Per-user sessions, replay protection, full encryption |
| Credential Leakage | Regex input screening, blocked tokens, warning prompts |
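
For readers wondering what regex-based input screening and pre-LLM redaction can look like in practice, here is a minimal sketch. It is not the AEGIS implementation. The `PATTERNS` table and the `redact` function are hypothetical, and the four patterns are illustrative only, so real deployments would need broader, tested rules.

```python
import re

# Illustrative patterns only; each label becomes the placeholder text.
PATTERNS = {
    "EMAIL": re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "AWS_ACCESS_KEY_ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "PRIVATE_KEY": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def redact(prompt: str):
    """Replace each match with a [LABEL] placeholder.

    Returns the redacted prompt and the list of labels that matched,
    which a caller could use to warn the user or block the request.
    """
    findings = []
    for label, pattern in PATTERNS.items():
        prompt, count = pattern.subn(f"[{label}]", prompt)
        if count:
            findings.append(label)
    return prompt, findings


if __name__ == "__main__":
    text = "Mail jane.doe@example.com, SSN 123-45-6789, key AKIAABCDEFGHIJKLMNOP"
    clean, found = redact(text)
    print(clean)  # Mail [EMAIL], SSN [US_SSN], key [AWS_ACCESS_KEY_ID]
    print(found)  # ['EMAIL', 'US_SSN', 'AWS_ACCESS_KEY_ID']
```

Pattern matching of this kind catches well-formed identifiers but misses free-text secrets such as strategy notes or unlabeled passwords, which is one reason to pair it with logging and human review rather than rely on it alone.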

AEGIS isn’t just software — it’s a forward-deployed solution. Each deployment includes a trained AI Security Engineer to help you:

- Define and enforce AI usage policies

- Configure secure workflows across departments

- Audit past use and harden future access

- Stay ahead of emerging LLM exploit techniques

Final Word: AI Use Without AI Governance Is Risk By Default

Organizations that don’t take proactive AI security measures are gambling with their data, their clients, and their IP.

You can’t afford to be blind to the 20 ways AI is already exposing your organization to risk. AEGIS gives you the visibility, control, and enforcement you need before regulators, clients, or competitors force the issue.

Secure the upside of AI. Lock down the downside.

That’s what AEGIS was built for.